Optimizing Google My Business for Hyper-Local SEO in 2025

Local SEO matters more than ever for small businesses in 2025. With growing competition and the rise of mobile search, an optimized Google My Business (GMB) profile has become a cornerstone of hyper-local SEO. Because search engines prioritize local intent, your profile can be a deciding factor in attracting nearby customers. In this blog, we’ll look at how optimizing Google My Business can help you stay ahead of the competition in local search.

What is Local SEO in 2025?

Before we get into optimizing Google My Business, let’s define local SEO. Local SEO is the practice of improving your online visibility in a specific geographic area, so that your business appears in local search results when potential customers look for products or services near them. As mobile “near me” searches continue to rise, local SEO in 2025 leans further toward hyper-local strategies that make your business easy for nearby customers to find.

Why Optimizing Google My Business is Crucial for Local SEO in 2025

Google My Business, which Google renamed Google Business Profile in 2021, is a free tool that lets you manage how your business appears in local search results, including Google Search and Google Maps. Optimizing it means keeping your business profile complete, accurate, and engaging, which directly affects your visibility in local search results. Because a complete, accurate profile is easier for Google to match with relevant local searches, optimizing Google My Business is key to succeeding with local SEO in 2025.

Let’s explore the essential steps to ensure your business is ready for Local SEO in 2025 by Optimizing Google My Business.

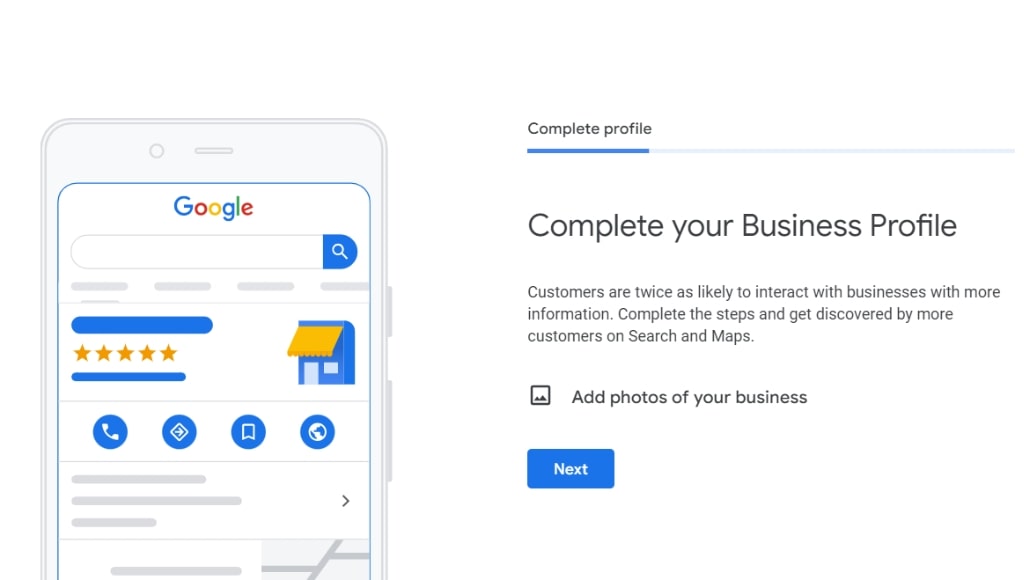

1. Optimizing Google My Business: Complete Your Profile

The first step in optimizing Google My Business is to make sure your profile is 100% complete. Businesses with complete profiles are twice as likely to be considered reputable by customers, while an incomplete profile is easy for potential customers to overlook.

Here’s what you need to include when Optimizing Google My Business:

- Business Name: Ensure it is accurate and consistent across all platforms.

- Address: Make sure your address is correct and formatted properly to avoid confusion in local searches.

- Phone Number: Use a local phone number to improve trust and relevance for Local SEO in 2025.

- Business Category: Select the most appropriate category for your business.

- Website URL: Link to your website or relevant landing page.

- Business Hours: Keep your hours updated, especially during holidays or special occasions.

When optimizing Google My Business, keep this information identical on every online platform where your business is listed. Inconsistent names, addresses, or phone numbers can confuse customers and weaken your local SEO efforts.
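One way to audit that consistency is to compare the name, address, and phone number from each listing against your reference details. The sketch below is only an illustration: the business details, the platform names (`directory_a`, `directory_b`), and the helper functions are all hypothetical, and the listing data is typed in by hand rather than fetched from any real service.

```python
import re

def normalize_phone(phone: str) -> str:
    """Keep digits only so formatting differences are ignored."""
    return re.sub(r"\D", "", phone)

def normalize_text(value: str) -> str:
    """Lowercase, drop punctuation, and collapse whitespace."""
    cleaned = re.sub(r"[^\w\s]", "", value.lower())
    return " ".join(cleaned.split())

def find_nap_mismatches(reference: dict, listings: dict) -> dict:
    """Return {platform: [fields that differ from the reference]}."""
    normalizers = {
        "name": normalize_text,
        "address": normalize_text,
        "phone": normalize_phone,
    }
    mismatches = {}
    for platform, listing in listings.items():
        differing = [
            field
            for field, normalize in normalizers.items()
            if normalize(listing.get(field, "")) != normalize(reference.get(field, ""))
        ]
        if differing:
            mismatches[platform] = differing
    return mismatches

# Hypothetical reference details and two hand-entered listings.
reference = {
    "name": "Corner Bakery & Cafe",
    "address": "12 Main St., Springfield",
    "phone": "(555) 010-1234",
}
listings = {
    "directory_a": {
        "name": "Corner Bakery & Cafe",
        "address": "12 Main St, Springfield",
        "phone": "555-010-1234",
    },
    "directory_b": {
        "name": "Corner Bakery",
        "address": "12 Main St., Springfield",
        "phone": "555 010 1234",
    },
}

print(find_nap_mismatches(reference, listings))
```

In this example only `directory_b` is flagged, because its business name is shortened. The normalization is deliberately simple: punctuation and phone formatting are ignored, but an abbreviation such as “St” versus “Street” would still be reported as a mismatch, which is usually what you want to review by hand.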

2. Optimizing Google My Business: Add High-Quality Photos

Visual content is vital for optimizing Google My Business and plays a significant role in how customers perceive your brand. Profiles with photos receive 42% more requests for directions and 35% more website clicks than profiles without them. For local SEO in 2025, adding high-quality, relevant images will help attract local customers and encourage engagement.

When Optimizing Google My Business, upload a variety of photos that showcase:

- Your storefront or business location.

- The interior of your business.

- Products or services you offer.

- Your team or staff members (which humanizes your business).

These images help users learn more about your business, and the extra engagement they generate can support your local visibility, which is essential for local SEO in 2025.

3. Optimizing Google My Business: Gather and Respond to Reviews

Customer reviews are critical for local SEO in 2025. Businesses with positive reviews and high ratings tend to rank higher in local search results. Optimizing Google My Business involves not only gathering reviews but also responding to them, whether they are positive or negative.

Here’s how reviews contribute to Local SEO in 2025:

- Positive reviews signal trust and credibility to both users and search engines.

- Responding to reviews shows potential customers that you care about their feedback.

- Google considers review quality, quantity, and frequency when ranking businesses for local searches.

To succeed in Local SEO in 2025, make it a priority to encourage satisfied customers to leave reviews and always take the time to thank them. This effort in Optimizing Google My Business can significantly boost your local search visibility.
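To keep an eye on the quantity, quality, and recency of your reviews, plus how often you respond, a small summary like the one below can help. It is a minimal sketch under stated assumptions: the `Review` structure, the 90-day window, and the sample reviews are all hypothetical, and the data would need to be entered or exported by you.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

@dataclass
class Review:
    rating: int                        # 1 to 5 stars
    posted_on: date
    owner_reply: Optional[str] = None  # None means no response yet

def summarize_reviews(reviews: list, today: date, recent_days: int = 90) -> dict:
    """Summarize review count, average rating, recent activity, and response rate."""
    if not reviews:
        return {"count": 0, "average_rating": None,
                "recent_count": 0, "response_rate": None}
    cutoff = today - timedelta(days=recent_days)
    replied = sum(1 for r in reviews if r.owner_reply)
    return {
        "count": len(reviews),
        "average_rating": round(sum(r.rating for r in reviews) / len(reviews), 2),
        "recent_count": sum(1 for r in reviews if r.posted_on >= cutoff),
        "response_rate": round(replied / len(reviews), 2),
    }

# Hypothetical sample data; the 90-day window is an assumption, not a Google rule.
sample = [
    Review(5, date(2025, 1, 10), "Thank you for visiting!"),
    Review(4, date(2024, 12, 2)),
    Review(2, date(2024, 6, 15), "Sorry about the wait. We have added staff."),
]
print(summarize_reviews(sample, today=date(2025, 2, 1)))
```

A falling `recent_count` or a low `response_rate` is a prompt to ask recent customers for feedback and to reply to the reviews still waiting for an answer.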

4. Optimizing Google My Business: Leverage Google Posts

Google Posts is a feature that allows businesses to publish updates, offers, events, and more directly to their GMB profile. Optimizing Google My Business by utilizing Google Posts helps you keep your profile fresh and engaging, which can improve your local search rankings.

For Local SEO in 2025, here’s how you can use Google Posts effectively:

- Announce sales, promotions, or new product launches.

- Share updates about business hours or services.

- Highlight special events happening at your location.

By consistently using Google Posts, you can maintain an active presence, which is beneficial for Local SEO in 2025. It keeps your business top of mind for customers and shows Google that you’re engaged with your audience.

5. Optimizing Google My Business: Utilize Attributes and Services

Google My Business allows you to list specific attributes and services related to your business. These attributes give potential customers a better understanding of what you offer and improve your chances of ranking higher in local searches. For Local SEO in 2025, Optimizing Google My Business by using relevant attributes is essential.

Here’s how attributes can help with Local SEO in 2025:

- Highlight important features, like “wheelchair accessibility” or “free Wi-Fi.”

- Specify if your business caters to certain groups, such as “LGBTQ+ friendly” or “pet-friendly.”

Accurately representing your business through attributes enhances your profile, making it more likely to appear in relevant local searches.

6. Optimizing Google My Business: Monitor and Update Your Information Regularly

For Local SEO in 2025, one of the most important aspects of Optimizing Google My Business is ensuring that your information is always up-to-date. Incorrect business information can lead to lost customers and lower search rankings.

Make it a habit to:

- Regularly update your business hours, especially during holidays.

- Ensure your contact information remains accurate.

- Add new photos and Google Posts to keep your profile fresh.

Keeping your GMB profile updated helps maintain visibility and credibility, both of which are essential for succeeding with Local SEO in 2025.
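The habits above can be turned into a simple reminder check. The sketch below assumes you record the date each part of your profile was last updated; the item names, the age limits, and the dates are hypothetical choices for illustration, not thresholds published by Google.

```python
from datetime import date

def stale_items(last_updated: dict, max_age_days: dict, today: date,
                default_max_age: int = 30) -> list:
    """Return the profile items whose last update is older than the allowed age."""
    return sorted(
        item
        for item, updated in last_updated.items()
        if (today - updated).days > max_age_days.get(item, default_max_age)
    )

# Hypothetical update log and age limits (in days).
last_updated = {
    "hours": date(2024, 12, 20),
    "contact_info": date(2024, 8, 1),
    "photos": date(2024, 9, 1),
    "posts": date(2025, 1, 25),
}
max_age_days = {"hours": 90, "contact_info": 180, "photos": 60, "posts": 14}

print(stale_items(last_updated, max_age_days, today=date(2025, 2, 1)))
```

With these sample dates, the contact information and photos are flagged as overdue for review, while the hours and posts are still within their limits.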

Conclusion: Mastering Local SEO in 2025 by Optimizing Google My Business

In 2025, businesses that focus on optimizing Google My Business will have a significant advantage in attracting local customers. From completing your profile to leveraging reviews and Google Posts, an optimized profile keeps your business visible and relevant in hyper-local searches.

By focusing on these strategies, your business will be well prepared to thrive in the competitive landscape of local SEO in 2025. Keep your profile accurate, engage with your audience, and regularly update your content to maximize your local search presence. Search is becoming more local, and optimizing Google My Business is the key to staying ahead.

FAQs (Frequently Asked Questions)

How can I optimize my Google My Business listing for 2025?

To optimize your GMB listing, make sure all business details (name, address, phone number) are accurate. Use relevant keywords in your business description and select the correct categories. Regularly upload high-quality photos, gather reviews, and respond to them. Stay active by posting updates or promotions, as fresh content helps with ranking.

Why are reviews important for hyper-local SEO?

Reviews build trust and increase your local ranking. The more positive reviews you have, the more likely you’ll rank higher in search results. Encourage satisfied customers to leave reviews, and always reply—whether positive or negative. Engaging with reviews shows Google that your business is active and credible.

How can I use GMB posts to improve my local ranking?

GMB posts allow you to share updates, offers, and events. Posting regularly keeps your listing fresh and signals to Google that your business is active. Use local keywords in your posts, and add images to make them more engaging. Timely, relevant content can help attract nearby customers and boost your visibility.

Can Google My Business help me appear in voice searches?

Yes! Many voice searches focus on local results like “near me” queries. Ensure your GMB profile is fully optimized with correct contact info, operating hours, and relevant keywords. A well-maintained listing increases your chances of being featured in voice search results, helping potential customers find you quickly.